The age of information can be mentally overwhelming, and the plethora of information available often seems to contradict itself. This is particularly true of news sources, which often carry headlines that seem too good to be true. The truth is that they often are. So how do we determine the strength of evidence within research?

The world of science is one of constant hypotheses and experiments. The problem is that not all experiments yield the same results. Let us consider the following as an example:

“Consumption of chocolate shown to improve mental health”

Within this example, let’s assume researchers began by sampling 40 individuals. Assuming the participants’ characteristics are fairly comparable, we follow this population of 40 for approximately one year.

From the observation, researchers found that the group who regularly consumed chocolate reported feeling happier and more resilient to external stresses than those who didn’t consume any chocolate.

Now let’s assume they run a number of statistical assessments that produce a p-value less than 0.05 (the commonly accepted threshold for significance).
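To make the 0.05 threshold concrete, here is a minimal sketch in Python. It simulates many small studies like the one above in which chocolate has no effect at all, and counts how often a test still returns p < 0.05. The even split of 20 eaters and 20 abstainers, the simulated mood scores, the function names and the choice of a permutation test are all illustrative assumptions, not details of any real study.

```python
import random
import statistics


def permutation_p_value(group_a, group_b, rng, n_permutations=300):
    """Two-sided permutation test for a difference in group means."""
    observed = abs(statistics.fmean(group_a) - statistics.fmean(group_b))
    combined = list(group_a) + list(group_b)
    n_a = len(group_a)
    at_least_as_extreme = 0
    for _ in range(n_permutations):
        # Reassign group labels at random and recompute the difference.
        rng.shuffle(combined)
        diff = abs(statistics.fmean(combined[:n_a]) - statistics.fmean(combined[n_a:]))
        if diff >= observed:
            at_least_as_extreme += 1
    # Add-one correction so the p-value is never exactly zero.
    return (at_least_as_extreme + 1) / (n_permutations + 1)


def false_positive_rate(n_studies=200, group_size=20, alpha=0.05, seed=1):
    """Share of no-effect studies that still reach p < alpha."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(n_studies):
        # Both groups come from the same distribution: chocolate does nothing.
        eaters = [rng.gauss(0, 1) for _ in range(group_size)]
        abstainers = [rng.gauss(0, 1) for _ in range(group_size)]
        if permutation_p_value(eaters, abstainers, rng) < alpha:
            hits += 1
    return hits / n_studies


rate = false_positive_rate()
print(f"No-effect studies reaching p < 0.05: {rate:.1%}")
```

Under these assumptions, roughly one in twenty no-effect studies should cross the threshold by chance alone, which is one reason a single small study with p < 0.05 is better treated as a starting point than as proof.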

With their findings they conclude that there is strong evidence in support of chocolate being a mood regulator, and that it should be prescribed to those suffering from bouts of low mood.

As a researcher, nutrition coach, or practicing nutritionist, would you determine the strength of this research to be adequate?

If your answer was no, the question now is: why?

They did the statistical assessments and they ran a fairly reasonable experiment, so why are their findings not conclusive enough for you to incorporate them into your practice as a nutritionist?

The answer is that the study falls short of being the best evidence available. To understand what constitutes the best available evidence we need only turn to what researchers call “The Evidence Hierarchy” or “The Evidence Pyramid”.

There are different evidence hierarchies depending on the question or field of work. In this example I will focus solely on the epidemiological hierarchy, which is foundational in most public health nutrition research. I will skip over animal and cell culture studies, as they sit in a world of their own and are appraised differently.

Fundamentally, the hierarchy breaks down quantitative research literature into different categories according to strength.

At the higher levels are the stronger sources of evidence and as you work your way down, the strength of evidence becomes weaker and weaker.

When it comes to the practical application of research, you want to apply the highest level of evidence available. That depends on what exists, however, and researchers are often forced to accept limited evidence even if it does not rank highly within the hierarchy.

Defining the strength of evidence within research

Meta Analysis

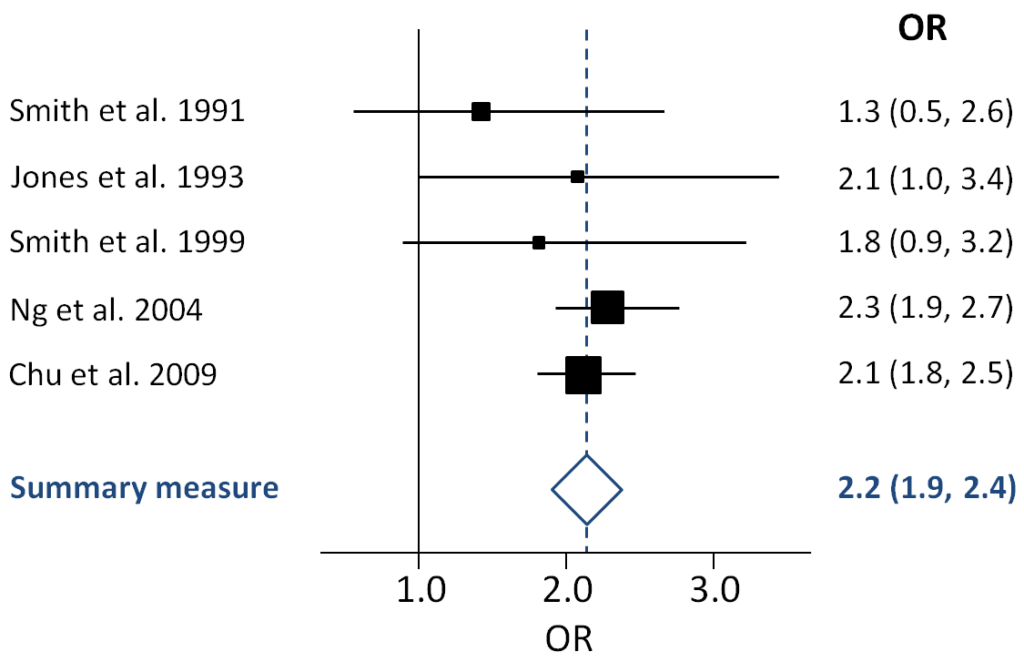

At the very top we have meta-analyses and systematic reviews. These are considered secondary sources of information and strong sources of evidence because they aggregate and summarize multiple studies, through a systematic method, into a single report.

In determining the strength of evidence within research, meta-analyses and systematic reviews sit comfortably at the top.

In particular, for a meta-analysis, the pooling of all the studies done on a particular topic produces, in a way, a super study that can determine if there is a true effect. They are often used in confirming current practices, guiding decision making and in informing future research.
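As a rough illustration of what pooling means, the sketch below combines three studies using inverse-variance weighting (a fixed-effect model), which is one common approach and not necessarily the one any given meta-analysis uses. The effect estimates, standard errors and function name are hypothetical values chosen only to show the arithmetic.

```python
import math


def pool_fixed_effect(estimates, standard_errors):
    """Inverse-variance weighted average of study effect estimates."""
    # More precise studies (smaller standard error) get more weight.
    weights = [1.0 / se ** 2 for se in standard_errors]
    total_weight = sum(weights)
    pooled = sum(w * e for w, e in zip(weights, estimates)) / total_weight
    pooled_se = math.sqrt(1.0 / total_weight)
    return pooled, pooled_se


# Hypothetical effect estimates (e.g. change in a mood score) and their
# standard errors from three imaginary studies.
estimates = [0.30, 0.10, 0.20]
standard_errors = [0.20, 0.10, 0.05]

pooled, pooled_se = pool_fixed_effect(estimates, standard_errors)
low, high = pooled - 1.96 * pooled_se, pooled + 1.96 * pooled_se
print(f"Pooled effect: {pooled:.3f} (95% CI {low:.3f} to {high:.3f})")
```

The pooled standard error is smaller than that of any single study, which is the sense in which the combined result behaves like a larger "super study". A fixed-effect model assumes every study is estimating the same underlying effect; when that is doubtful, a random-effects model is usually considered instead.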

Although they are the gold standard, they are limited in their scope to answer more general questions, because they rely on research that already exists.

Furthermore, a systematic review or meta-analysis is only as reliable as the primary research it identifies and includes. For niche topics there is often very little primary research available, which limits the potential of the review to produce a conclusive report.

Similarly, systematic reviews tend to be very specific in their research question. Because of this they often lack generalizability, and so may not be applicable to alternative questions of a similar nature.

Randomised Control Trials

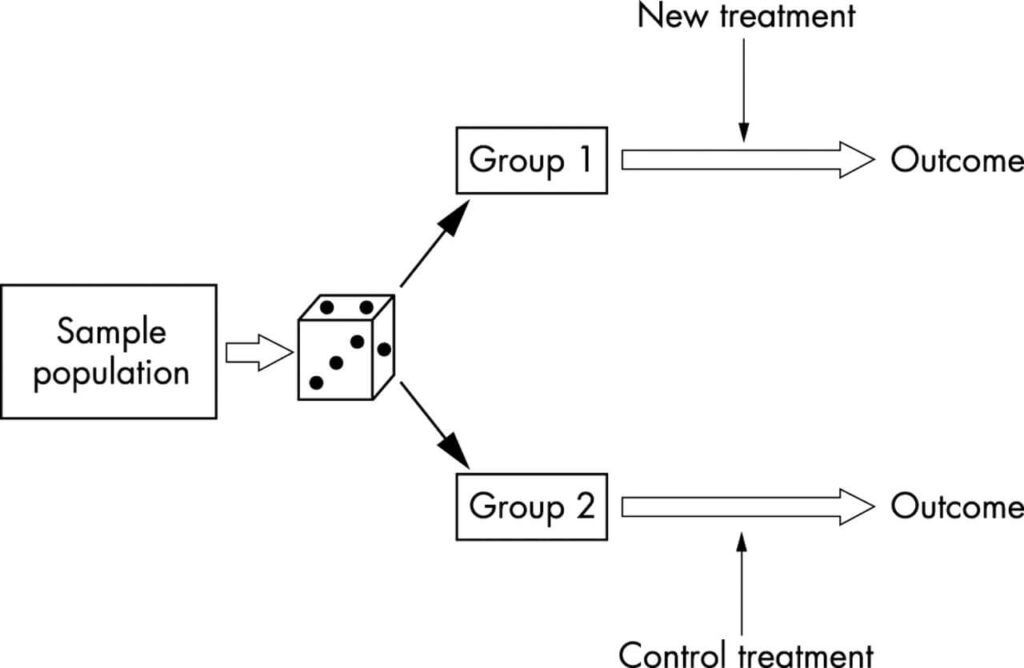

Designing a research project: randomised controlled trials and their principles. Emergency Medicine Journal 2003;20:164-168.

Randomised controlled trials (RCTs) are next, and they are considered a very good source of primary evidence. They are set apart from meta-analyses and systematic reviews because each is a single report of one research study.

When learning how to determine the strength of evidence within research, consider an RCT a very good source of evidence because it has the lowest risk of systematic bias. The process of randomization provides the best internal validity, and so the RCT is considered the gold standard of primary evidence.

The limitations of RCTs are to do with cost and feasibility. They are not easy to develop and implement primarily because they require a very controlled environment where almost every variable is accounted for. When it comes to nutritional research, RCTs are particularly challenging due to the latency between exposure and outcomes.

When we see an RCT published, it’s easy to assume that it’s the perfect source of evidence. However, secondary considerations must be taken into account, including but not limited to scope, sample size and population characteristics. Even when faced with the best primary study design, good researchers know to scratch beneath the surface.

Cohort Studies

Following on from RCTs we have cohort studies, which are essentially observational studies that follow research participants over a prolonged period of time, sometimes several decades. Cohort studies typically follow participants with shared characteristics. However, the lack of control over the participants limits the capacity to infer causal relationships between exposure and outcome.

Even so, the cohort study can be a useful design, particularly for identifying risk factors associated with certain diseases, e.g. smoking and cancer. Because of their scope, often recruiting thousands of participants, cohort studies can yield good information, but they suffer from the same limitations as RCTs in that they are often expensive and time consuming to develop and implement.

When thinking about how to determine the strength of evidence within research, we must ask questions like “why are RCTs ranked higher in the hierarchy than cohort studies?”

Great question!

The answer is all to do with the randomization element of the RCT. The limitation of cohort studies is that they rely on natural allocation: participants end up exposed or unexposed through their own choices and circumstances. This is a source of bias and so limits the comparability between groups.
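The difference between natural and random allocation can be sketched with a small simulation. In the made-up world below, chocolate has no effect on mood, but a hidden trait (labelled baseline wellbeing here) makes people both happier and more likely to choose chocolate. Every variable, number and function name is a hypothetical assumption for illustration.

```python
import random
import statistics


def mean_difference(moods, eats_chocolate):
    """Average mood of chocolate eaters minus average mood of abstainers."""
    eaters = [m for m, c in zip(moods, eats_chocolate) if c]
    abstainers = [m for m, c in zip(moods, eats_chocolate) if not c]
    return statistics.fmean(eaters) - statistics.fmean(abstainers)


def simulate(n_people=20000, seed=7):
    rng = random.Random(seed)
    # Hidden trait (assumed): baseline wellbeing, unknown to the researchers.
    wellbeing = [rng.gauss(0, 1) for _ in range(n_people)]
    # Mood depends on wellbeing plus noise. Chocolate has NO true effect.
    moods = [w + rng.gauss(0, 1) for w in wellbeing]
    # Natural allocation: people with higher wellbeing tend to choose chocolate.
    natural = [w + rng.gauss(0, 1) > 0 for w in wellbeing]
    # Random allocation: a coin flip, unrelated to wellbeing.
    randomised = [rng.random() < 0.5 for _ in range(n_people)]
    return mean_difference(moods, natural), mean_difference(moods, randomised)


cohort_diff, rct_diff = simulate()
print(f"Natural allocation, apparent effect: {cohort_diff:.2f}")
print(f"Random allocation, apparent effect:  {rct_diff:.2f}")
```

With natural allocation the eaters look markedly happier even though chocolate does nothing in this simulation, because the two groups already differed in wellbeing. With random allocation the hidden trait is balanced across groups on average, so the apparent effect stays close to zero.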

Other observational study designs

Below cohort studies are the rest of the observational study designs, such as case-control studies, cross-sectional studies and case series, which all come with their respective pros and cons.

Fundamentally, these are lower grade observational study designs. Although they can be valid sources of information and can be used to identify associations between exposure and outcome, they lack the power to infer causal relationships.

It is also very important to note that in some contexts these observational study designs are the only applicable ones. Don’t discredit a study just because it’s lower in the hierarchy. These designs still yield valuable information and can be used to estimate the prevalence of diseases within a population. In summary, they can be very good sources of evidence for certain research questions.

Case Studies

At the foundation of the pyramid, you will see anecdotes, case studies and personal opinions. Although the placement of personal opinions would be considered a no-brainer, it is less obvious why case studies are also ranked at the bottom.

Case studies can provide novel insights into specific cases, primarily when dealing with rare diseases. They are often employed as points of entry when researching novel diseases or exposures. In short they can provide researchers with a hypothesis.

Example Case Study

Let’s have a fun example. Let’s say a Mr. Bruce Banner is admitted to hospital for a rare and undiagnosed condition. As a researcher I begin to ask him a series of questions. He informs me that he was exposed to lethal levels of gamma radiation a few months prior and that ever since, he turns into a big green hulk when he’s angry.

As a researcher I now have a hypothesis: that exposure to lethal levels of gamma radiation can cause people to turn into Hulks. The problem is that I can’t assert this as a causal relationship because it’s a single case study.

I’m also limited in my capacity to test this hypothesis ethically. An RCT is certainly out of the question because exposing people to lethal levels of gamma radiation is very likely to lead to a lot of… very unwell… research participants. So I’m limited in the assessments I can make.

I will let you, the reader, determine what study design would likely be most feasible to assess the Hulk hypothesis.

Expert opinions

On the topic of expert opinions, it’s a no-brainer when it comes to grading evidence that personal opinions are at the bottom. Although there is a time and a place for expert opinions, they do not constitute binding evidence.

Conclusions

Within the field of nutrition, as professionals, we cannot provide advice based on gut feeling or opinion. Treatment should only be provided based on the best available evidence that currently exists. When discussing the “best diet” with your clients, let the science point the way.

Understanding the hierarchy is essential to finding information and incorporating it into evidence-based practice. If you understand the hierarchy of evidence, then the complex jungle that is the realm of scientific literature opens up like a flower in bloom. If you wish to test yourself, check out our blog post on sleep and allergies and determine for yourself where you’d place this research within the pyramid.

So…do you feel confident in how to determine the strength of evidence in research?